Due: Tuesday Feb 12, 11:59PM EST

100 points total

Overview

In this assignment you will write a parallel renderer in CUDA that draws colored circles. While this renderer is very simple, parallelizing the renderer will require you to design and implement data structures that can be efficiently constructed and manipulated in parallel. This is a challenging assignment so you are advised to start early. Seriously, you are advised to start early. Good luck!

Environment Setup

This assignment requires an NVIDIA GPU with CUDA compute capability 2.0. The following lab machines have adequate GPUs:

- The machines in GHC 5205 (

ghc52-81.ghc.andrew.cmu.edu) have NVIDIA GTX 480 GPUs. The capabilities of this chip were described in lecture. Also, Table F.1 in the CUDA C Programming Guide is a handy reference for the maximum number of CUDA threads per thread block, size of thread block, shared memory, etc. The GTX 480 GPUs support CUDA compute capability 2.0. - NVIDIA GTX 680 GPUs (compute capability 3.0) are installed in

ghc13-14.ghc.andrew.cmu.edu. (These machines are physically in GHC 5201.) These are the fastest GPUs we have on campus. ghc02-12.ghc.andrew.cmu.educontain NVIDIA GTX 670 GPUs (compute capability 3.0)ghc01.ghc.andrew.cmu.educontains a NVIDIA GTX 650 GPU (compute capability 3.0).

To get started:

- The NVIDIA CUDA C/C++ Compiler (NVCC) is located at

/usr/local/cuda/bin/, which you need to add to yourPATH.1 - The CUDA shared library will be loaded at runtime. It is located at

/usr/local/cuda/lib64/, which you need to add to yourLD_LIBRARY_PATH.2 - Download the Assignment 2 starter code from the course directory:

/afs/cs.cmu.edu/academic/class/15418-s13/assignments/asst2.tgz

The CUDA C programmer's guide is an excellent reference for learning how to program in CUDA.

You can also find a large number of examples in the CUDA SDK /usr/local/cuda/C/src. In addition, there are a wealth

of CUDA tutorials and SDK examples on the web and on the NVIDIA developer site.

Assignment

Part 1: CUDA Warm-Up (5 pts)

To gain a bit of practice writing CUDA programs your warm-up task is to re-implement the SAXPY function

from assignment 1 in CUDA. Starter code for this part of the assignment is located in the /saxpy directory

of the assignment tarball.

Please finish off the implementation of SAXPY in the function saxpyCuda in saxpy.cu. You will need to

allocate device global memory arrays and copy the contents of the host input arrays X, Y, and result into

CUDA device memory prior to performing the computation. Conversely, after the CUDA computation is

complete, the result must be copied back into host memory. Please see the definition of cudaMemcpy function

in Section 3.2.2 of the Programmer's Guide.

As part of your implementation, add timers around the CUDA kernel invocation in saxpyCuda. Your

additional timing measurement should not include the time to transfer data to and from device memory

(just the time to execute the computation). Note that the call to cudaThreadSynchronize following the

kernel call waits for completion of all CUDA work since the kernel launch is asynchronous with the main

application thread.

Question 1. What performance do you observe compared to the sequential CPU-based implementation of SAXPY (recall program 3 from Assignment 1)? Compare and explain the difference between the results provided by two sets of timers (the timer you added and the timer that was already in the provided starter code). Are the bandwidth values observed roughly consistent with the reported bandwidths available to the different components of the machine?

Part 2: A Simple Circle Renderer (95 pts)

The directory /render of the assignment starter code contains an implementation of renderer that draws colored

circles. Build the code, and run the render with the following command line: ./render rgb. You will see

an image of three circles appear on screen ('q' closes the window). Now run the renderer with the command

line ./render snow. You should see an animation of falling snow.

The assignment starter code contains two versions of the renderer: a sequential, single-threaded C++

reference implementation, implemented in refRenderer.cpp, and an incorrect parallel CUDA implementation in

cudaRenderer.cpp.

Renderer Overview

We encourage you to familiarize yourself with the structure of the renderer codebase by inspecting the reference

implementation in refRenderer.cpp. The method setup is called prior to rendering the first frame. In your CUDA-accelerated

renderer, this method will likely contain all your renderer initialization code (allocating buffers, etc). render

is called each frame and is responsible for drawing all circles into the output image. The other main function of

the renderer, advanceParticles, is also invoked once per frame. It updates circle positions and velocities.

You will not need to modify advanceParticles in this assignment.

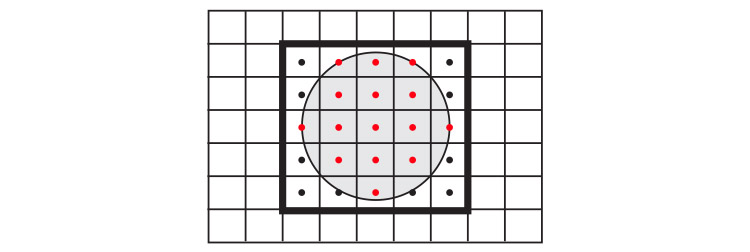

The renderer accepts an array of circles (3D position, velocity, radius, color) as input. The basic sequential algorithm for rendering each frame is:

Clear image

for each circle

update position and velocity

for each circle

compute screen bounding box

for all pixels in bounding box

compute pixel center point

if center point is within the circle

compute color of circle at point

blend contribution of circle into image for this pixel

Figure 2 illustrates the basic algorithm for computing circle-pixel coverage using point-in-circle tests. Notice that a circle contributes color to an output pixel only if the pixel's center lies within the circle.

CUDA Renderer

After familiarizing yourself with the circle rendering algorithm as implemented in the reference code, now

study the CUDA implementation of the renderer provided in cudaRenderer.cu. You can run the CUDA

implementation of the renderer using the --renderer cuda program option.

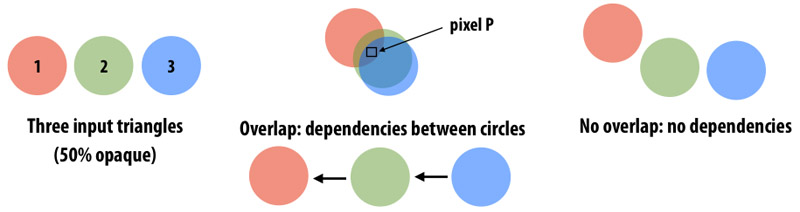

The provided CUDA implementation parallelizes computation across all input circles, assigning one circle to each CUDA thread. While this CUDA implementation is a complete implementation of the mathematics of a circle renderer, it contains several major errors that you will fix in this assignment. Specifically: the current implementation does not ensure image update is an atomic operation and it does not preserve the required order of image updates (the ordering requirement will be described below).

Renderer Requirements

Your parallel CUDA renderer implementation must maintain two invariants that are preserved trivially in the sequential implementation.

- Atomicity: All image update operations must be atomic. The critical region includes reading the four 32-bit floating-point values (the pixel's rgba color), blending the contribution of the current circle with the current image value, and then writing the pixel's color back to memory.

- Order: Your renderer must perform updates to an image pixel in circle input order. That is, if circle 1 and circle 2 both contribute to pixel P, any image updates to P due to circle 1 must be applied to the image before updates to P due to circle 2. As discussed in class, preserving the ordering requirement allows for correct rendering of transparent surfaces. (It has a number of other benefits for graphics systems. If curious, talk to Kayvon.) A key observation is that the definition of order only specifies the order of updates to the same pixel. Thus, as shown in Figure 3, there are no ordering requirements between circles that do not contribute to the same pixel. These circles can be processed independently.

Since the provided CUDA implementation does not satisfy these requirements, the result of not correctly respecting order can be seen by running the CUDA renderer implementation on the rgb and circles scenes. You will see horizontal streaks through the resulting images. These streaks will change with each frame.

What You Need To Do

Your job is to write the fastest, correct CUDA renderer implementation you can. You may take any approach you see fit, but your renderer must adhere to the atomicity and order requirements specified above. A solution that does not meet both requirements will be given no more than 10 points on part 2 of the assignment. We have already given you such a solution!

A good place to start would be to read through cudaRenderer.cu and convince yourself that it does not meet the correctness

requirement. To visually see the effect of violation of above two requirements, compile the program with make.

Then run ./render -r cuda rgb which should display the three circles image. Horizontal streaks through the generated image

show the incorrect behavior. Compare this image with the image generated by sequential code by running

./render rgb.

Following are some of the options to ./render:

-b --bench START:END Benchmark mode, do not create display. Time frames from START to END -c --check Runs both sequential and cuda versions and checks correctness of cuda version -f --file FILENAME Dump frames in benchmark mode (FILENAME_xxxx.ppm) -r --renderer WHICH Select renderer: WHICH=ref or cuda -s --size INT Make rendered imagex pixels -? --help Prints information about switches mentioned here.

Checker code: To detect correctness of the program, render has a convenient --check option. This option runs the sequential version of the reference CPU renderer along with your CUDA renderer and then compares the resulting images to ensure correctness. The time taken by your CUDA renderer implementation is also printed.

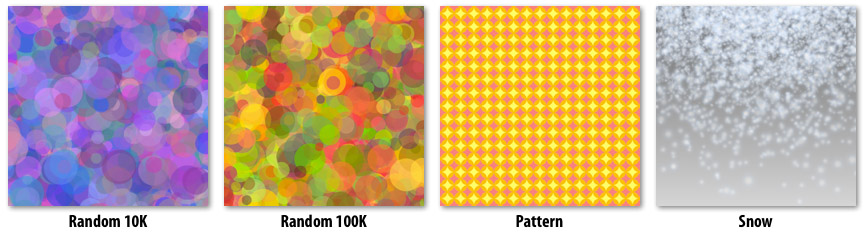

We provide are total of five circle datasets you will be graded on. To check the correctness and performance score of your code, run make check in the /render directory. If you run it on the starter code, the program will print a table like the following:

------------

Score table:

------------

-------------------------------------------------------------------------------------------

| Scene Name | Naive Time (Tn) | Fast Time (To) | Your Time (T) | Score |

-------------------------------------------------------------------------------------------

| rgb | 4.5 | 0.4 | 127.0251 (F) | 0 |

| rand10k | 230 | 11.03 | 68.5904 (F) | 0 |

| rand100k | 2305 | 113.87 | 751.5714 (F) | 0 |

| pattern | 27 | 0.88 | 4.1546 (F) | 0 |

| snowsingle | 2277 | 49.26 | 16.1984 (F) | 0 |

-------------------------------------------------------------------------------------------

| Total score: | 0/75 |

-------------------------------------------------------------------------------------------

In the above table, "naive time" is the performance of a very naive, but correct, CUDA solution for your current machine. "Fast time" is the performance of a good solution on your current machine. "Your time" is the performance of your current CUDA renderer solution. Your grade will depend on the performance of your implementation compared to these reference implementations (see Grading Guidelines). Note that the reference times are computed on an NVIDIA GTX 480 GPU, so you should compare your implementation's performance to the reference times only if it's run on a GTX 480.

Along with your code, we would like you to hand in a clear, high-level description of how your implementation works as well as a brief description of how you arrived at this solution. Specifically address approaches you tried along the way, and how you went about determining how to optimize your code (For example, what measurements did you perform to guide your optimization efforts?).

Aspects of your work that you should mention in the write-up include:

- Include both partners names and andrew id's at the top of your write-up.

- Replicate the score table generated for your solution.

- Describe how you decomposed the problem and how you assigned work to CUDA thread blocks and threads (and maybe even warps).

- Describe where synchronization occurs in your solution.

- What, if any, steps did you take to reduce communication requirements (e.g., synchronization or main memory bandwidth requirements)?

- Briefly describe how you arrived at your final solution. What other approaches did you try along the way. What was wrong with them?

Grading Guidelines

- The write-up for part 2 is worth 20 points.

Your implementation is worth 75 points. These are equally divided into 15 points per scene as follows:

- 2 correctness points per scene.

- 13 performance points per scene (only obtainable if the solution is correct). The score table has two reference times: Tn (Naive Time) and To (Good Time). These times give the performance of two functionally correct CUDA renderer solutions. One of them is very naive and other implements a reasonable set of optimizations.

- No performance points will be given for solutions having time (T) worse than Tn.

- Full performance points will be given for solution within 15% of optimal solution ( T < 1.15 * To )

For other values of T (for 1.15 To <= T < Tn), your performance score on scale 1 to 13 will be calculated as :

$Score = RoundUp \Big(\dfrac{T_o}{T} * 13\Big)$

Up to five points extra credit (instructor discretion) for solutions that achieve significantly greater performance than required. Your write-up must explain your approach thoroughly.

- Up to five points extra credit (instructor discretion) for a high-quality parallel CPU-only renderer implementation that achieves performance competitive to your CUDA renderer. Feel free to use any tools at your disposal (e.g., SIMD intrinsics, ISPC, pthreads). To receive credit you should analyze the performance of your GPU and CPU-based solutions and discuss the reasons for differences in implementation choices made.

Tips and Hints

Below are a set of tips and hints compiled from previous years. Note that there are various ways to implement your renderer and not all hints may apply to your approach.

- To facilitate remote development and benchmarking, we have created a

--benchmarkoption to the render program. This mode does not open a display, and instead runs the renderer for the specified number of frames. - When in benchmark mode, the

--fileoption sets the base file name for PPM images created at each frame. Created files are basenamexxxx.ppm. No PPM files are created if the--fileoption is not used. - There are two potential axes of parallelism in this assignment. One axis is parallelism across pixels another is parallelism across circles (provided the ordering requirement is respected for overlapping circles).

- The prefix-sum operation provided in

exclusiveScan.cu_inlmay be valuable to you on this assignment (not all solutions may choose to use it). See the simple description of a prefix-sum here. We have provided an implementation of an exclusive prefix-sum on a power-of-two-sized array in shared memory. The provided code does not work on non-power-of-two inputs. - You are allowed to use the Thrust library if you so choose. Thrust is not necessary to achieve the performance of the optimized CUDA reference implementations.

- Is there significant data reuse in the renderer? What can be done to exploit this reuse?

- How will you ensure atomicity of image update since there is no CUDA language primitive that performs the logic of the image update operation atomically? Constructing a lock out of global memory atomic operations is one solution, but keep in mind that even if your image update is atomic, the updates must be performed in the required order. We suggest that you think about ensuring order in your parallel solution first, and only then consider the atomicity problem if it still exists in your solution.

- If you are having difficulty debugging your CUDA code, you can use

printfdirectly from device code if you use a sufficiently new GPU and CUDA library: see this brief guide by one of your classmates. - If you find yourself with free time, have fun making your own scenes. A fireworks demo is just asking to be made!

3.4 Hand-in Instructions

Hand-in directories have been created at:

/afs/cs.cmu.edu/academic/class/15418-s13/handin/asst2/<ANDREW ID>/

Remember: You need to execute aklog cs.cmu.edu to avoid permission issues.

- Please submit your writeup as the file

writeup.pdf. - Please submit your code in the file

code.tgz. This tarball should contain those files fromasst2_srcdirectories which you changed. To keep submission sizes small, please do amake cleanprior to creating the archive, and remove any residual output images, etc. Before submitting the source files, make sure that all code is compilable and runnable! We should be able to simply untarcode.tgz, make, then execute your programs without manual intervention. You can easily compare your directory with source directoy by adiff -rcommand.

Our grading scripts will rerun the checker code allowing us to verify your score matches what you submitted in the writeup.pdf. We might also try to run your code on other datasets to further examine its correctness.

-

To add

/usr/local/cuda/binto your path, executesetenv PATH /usr/local/cuda/bin:${PATH}for csh orexport PATH=/usr/local/cuda/bin:${PATH}in bash. ↩ -

Execute

setenv LD_LIBRARY_PATH ${LD_LIBRARY_PATH}:/usr/local/cuda/lib64/for csh orexport LD_LIBRARY_PATH=/usr/local/cuda/lib64/:${LD_LIBRARY_PATH}for bash. ↩