Due: Tuesday Jan 28th, 11:59PM EST

70 points total + 5 points extra credit

Overview

This assignment is intended to help you develop an understanding of the two primary forms of parallel execution present in a modern multi-core CPU:

- SIMD execution within a single processing core

- Parallel execution using multiple cores

You will also gain experience measuring and reasoning about the performance of parallel programs (a challenging, but important, skill you will use throughout this class). This assignment involves only a small amount of programming, but a lot of analysis!

Environment Setup

You will need to run code on the machines in GHC 5201/5205 for this assignment.

(Hostnames for these machines are ghc01-26.ghc.andrew.cmu.edu and

ghc51-81.ghc.andrew.cmu.edu respectively.)

These machines contain quad-core 2.6 GHz Intel Xeon processors1. Each core

core in the Xeon processor can execute SSE4 instructions which describe

simultaneous execution of the same operation on four single-precision data

values. For the curious, a complete specication for this CPU can be found at

http://ark.intel.com/products/39718/Intel-Xeon-Processor-W3520.

Note: We will grade your analysis of code run on the 5201/5205 machines, however

for kicks, you may also want to run these programs on the six-core machines in GHC 30002 (ghc27-50.ghc.andrew.cmu.edu) or your own machine. (You will first need to install the Intel SPMD Program Compiler (ISPC) available

here: http://ispc.github.com/). Those of you with personal machines that support Intel's new AVX

instructions should be able to observe significant speedups. Further, running

these programs on a CPU with hyper-threading enabled can give some interesting

results. Feel free to include your findings from running code on other machines in your report as well, just be very clear what machine you were running on.

To get started:

- ISPC is needed to compile many of the programs used in this assignment. ISPC is currently installed on the Gates 5201 and 5205 lab machines in the directory

/usr/local/ispc. You will need to add this directory to your system path. - Download the Assignment 1 starter code from the course directory:

/afs/cs.cmu.edu/academic/class/15418-s14/assignments/asst1.tgz

Program 1: Parallel Fractal Generation Using Pthreads (15 points)

Build and run the code in the prog1_mandelbrot_threads/ directory of the

Assignment 1 code base. This program produces the image file

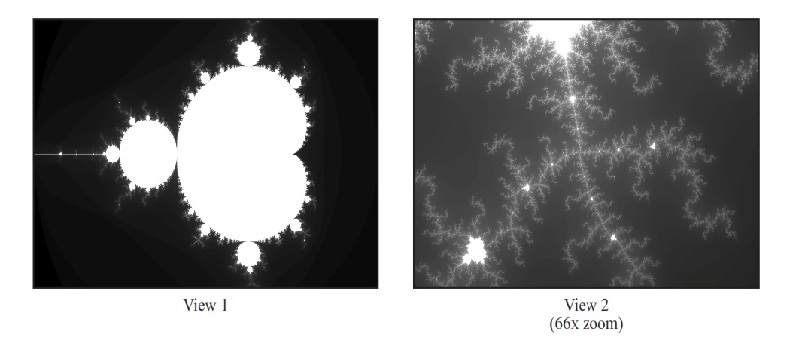

mandelbrot-serial.ppm3, which is a visualization of a famous set of complex

numbers called the Mandelbrot set. As you can see in the images below, the result is a familiar and beautiful fractal.

Each pixel in the image corresponds to a value in the complex plane, and the

brightness of each pixel is proportional to the computational cost of

determining whether the value is contained in the Mandelbrot set. To get image 2,

use the command option --view 2.

(See function mandelbrotSerial() defined in mandelbrotSerial.cpp). You can learn more

about the definition of the Mandelbrot set at

http://en.wikipedia.org/wiki/Mandelbrot_set.

Your job is to parallelize the computation of the images using pthreads. Starter

code that spawns one additional thread is provided in the function

mandelbrotThread() located in mandelbrotThread.cpp. In this function, the

main application thread creates another additional pthread using

pthread_create. It waits for this thread to complete using pthread_join.

Currently the launched thread does not do any computation and returns immediately.

You should add code to workerThreadStart function to accomplish this task.

You will not need to make use of any other pthread API calls in this assignment.

What you need to do:

- Modify the starter code to parallelize the Mandelbrot generation using two processors. Specifically, compute the top half of the image in thread 0, and the bottom half of the image in thread 1. This type of problem decomposition is referred to as spatial decomposition since different spatial regions of the image are computed by different processors.

- Extend your code to utilize 2, 3, and 4 threads, partitioning the image generation work accordingly. In your write-up, produce a graph of speedup compared to the reference sequential implementation as a function of the number of cores used. Is speedup linear in the number of cores used? In your writeup hypothesize why this is (or is not) the case?

- To confirm (or disprove) your hypothesis, measure the amount of time

each thread requires to complete its work by inserting timing code at

the beginning and end of

workerThreadStart(). How do your measurements explain the speedup graph you previously created? - Modify the mapping of work to threads to achieve to improve speedup to at least 3.5x on both views of the Mandelbrot set. You may not use any synchronization between threads. We are expecting you to come up with a single work decomposition policy that will work well for all thread counts---no hard coding a solution specific to each configuration. (Hint: There is a very simple static assignment that will achieve this goal, and no communication/synchronization among threads is necessary.). In your writeup, describe your approach and report the final 4-thread speedup obtained.

Program 2: Vectorizing Code Using SIMD Intrinsics (20 points)

Take a look at the function clampedExpSerial in prog2_vecintrin/main.cpp of the

Assignment 1 code base. The clampedExp() function raises values[i] to the power given by exponents[i] for all elements of the input array and clamps the resulting values at 9.999999. In program 2, your job is to vectorize this piece of code so it can be run on a machine with SIMD vector instructions.

However, rather than craft an implementation using SSE or AVX vector instrinsics that map to real vector instructions on moderns CPUs, to make things a little easier, we're asking you to implement your version using 15-418's "fake vector instrinics" defined in CMU418intrin.h. The CMU418intrin.h library provides you with a set of vector instructions that operate

on vector values and/or vector masks. (These functions don't translate to real CMU vector instructions, instead we simulate these operations for you in our library, and provide feedback that makes for easier debugging.) As an example of using the 15-418 intrinsics, a vectorized version of the abs() function is given in main.cpp. This example contains some basic vector loads and stores and manipulates mask registers. Note that the abs() example is only a simple example, and in fact the code does not correctly handle all inputs! (We will let you figure out why!) You may wish to read through all the comments and function definitions in CMU418intrin.h to know what operations are available to you.

Here are few hints to help you in your implementation:

- Every vector instruction is subject to an optional mask parameter. The mask parameter defines which lanes whose output is "masked" for this operation. A 0 in the mask indicates a lane is masked, and so its value will not be overwritten by the results of the vector operation. If no mask is specified in the operation, no lanes are masked. (Note this equivalent to providing a mask of all ones.) Hint: Your solution will need to use multiple mask registers and various mask operations provided in the library.

- Hint: Use

_cmu418_cntbitsfunction helpful in this problem. - Consider what might happen if the total number of loop iterations is not a multiple of SIMD vector width. We suggest you test

your code with

./myexp -s 3. Hint: You might find_cmu418_init_oneshelpful. - Hint: Use

./myexp -lto print a log of executed vector instruction at the end. Use functionaddUserLog()to add customized debug information in log. Feel free to add additionalCMU418Logger.printLog()to help you debug.

The output of the program will tell you if your implementation generates correct output. If there are incorrect results, the program will print the first one it finds and print out a table of function inputs and outputs. Your function's output is after "output = ", which should match with the results after "gold = ". The program also prints out a list of statistics describing utilization of the 15418 fake vector units. You should consider the performance of your implementation to be the value "Total Vector Instructions". (You can assume every 15-418 fake vector instruction takes one cycle on the 15-418 fake SIMD CPU.) "Vector Utilization" shows the percentage of vector lanes that are enabled.

What you need to do:

- Implement a vectorized version of

clampedExpSerialinclampedExpVector. Your implementation should work with any combination of input array size (N) and vector width (VECTOR_WIDTH). - Run

./myexp -s 10000and sweep the vector width from 2, 4, 8, to 16. Record the resulting vector utilization. You can do this by changing the#define VECTOR_WIDTHvalue inCMU418intrin.h. Does the vector utilization increase, decrease or stay the same asVECTOR_WIDTHchanges? Why?

Program 3: Parallel Fractal Generation Using ISPC (15 points)

Now that you're comfortable with SIMD execution, we'll return to parallel Mandelbrot fractal generation (like in program 1). Like Program 1, Program 3 computes a mandelbrot fractal image, but it achieves even greater speedups by utilizing both the Xeon CPUs four cores and the SIMD execution units within each core.

In Program 1, you parallelized image generation by creating one thread for each processing core in the system. Then, you assigned parts of the computation to each of these concurrently executing threads.4 Instead of specifying a specific mapping of computations to concurrently executing threads, Program 3 uses ISPC language constructs to describe independent computations. These computations may be executed in parallel without violating program correctness (and indeed they will!). In the case of the Mandelbrot image, computing the value of each pixel is an independent computation. With this information, the ISPC compiler and runtime system take on the responsibility of generating a program that utilizes the CPUs collection of parallel execution resources as efficiently as possible.

You will make a simple fix to Program 3 which is written in a combination of

C++ and ISPC (the error causes a performance problem, not a correctness one).

With the correct fix, you should observe performance that is over ten times

greater than that of the original sequential Mandelbrot implementation from

mandelbrotSerial().

Program 3, Part 1. A Few ISPC Basics (7 of 15 points)

When reading ISPC code, you must keep in mind that although the code appears

much like C/C++ code, the ISPC execution model differs from that of standard

C/C++. In contrast to C, multiple program instances of an ISPC program are

always executed in parallel on the CPU's SIMD execution units. The number of

program instances executed simultaneously is determined by the compiler (and

chosen specifically for the underlying machine). This number of concurrent

instances is available to the ISPC programmer via the built-in variable

programCount. ISPC code can reference its own program instance identifier via

the built-in programIndex. Thus, a call from C code to an ISPC function can

be thought of as spawning a group of concurrent ISPC program instances

(referred to in the ISPC documentation as a gang). The gang of instances

runs to completion, then control returns back to the calling C code.

Stop. This is your friendly instructor. Please read the preceeding paragraph again. Trust me.

As an example, the following program uses a combination of regular C code and ISPC code to add two 1024-element vectors. As we discussed in class, since each instance in a gang is independent and performing the exact same program logic, execution can be accelerated via implementation using SIMD instructions.

A simple ISPC program is given below. The following C code will call the following ISPC code:

------------------------------------------------------------------------

C program code: myprogram.cpp

------------------------------------------------------------------------

const int TOTAL_VALUES = 1024;

float a[TOTAL_VALUES];

float b[TOTAL_VALUES];

float c[TOTAL_VALUES]

// Initialize arrays a and b here.

sum(TOTAL_VALUES, a, b, c);

// Upon return from sumArrays, result of a + b is stored in c.

The corresponding ISPC code:

------------------------------------------------------------------------

ISPC code: myprogram.ispc

------------------------------------------------------------------------

export sum(uniform int N, uniform float* a, uniform float* b, uniform float* c)

{

// Assumption programCount divides N evenly.

for (int i=0; i<N; i+=programCount)

{

c[programIndex + i] = a[programIndex + i] + b[programIndex + i];

}

}

The ISPC program code above interleaves the processing of array elements among program instances. Note the similarity to Program 1, where you statically assigned parts of the image to threads.

However, rather than thinking about how to divide work among program instances

(that is, how work is mapped to execution units), it is often more convenient,

and more powerful, to instead focus only on the partitioning of a problem into

independent parts. ISPCs foreach construct provides a mechanism to express

problem decomposition. Below, the foreach loop in the ISPC function sum2

defines an iteration space where all iterations are independent and therefore

can be carried out in any order. ISPC handles the assignment of loop iterations

to concurrent program instances. The difference between sum and sum2 below

is subtle, but very important. sum is imperative: it describes how to

map work to concurrent instances. The example below is declarative: it

specifies only the set of work to be performed.

-------------------------------------------------------------------------

ISPC code:

-------------------------------------------------------------------------

export sum2(uniform int N, uniform float* a, uniform float* b, uniform float* c)

{

foreach (i = 0 ... N)

{

c[i] = a[i] + b[i];

}

}

Before proceeding, you are encouraged to familiarize yourself with ISPC

language constructs by reading through the ISPC walkthrough available at

http://ispc.github.com/example.html. The example program in the walkthrough

is almost exactly the same as Program 3's implementation of mandelbrot_ispc()

in mandelbrot.ispc. In the assignment code, we have changed the bounds of

the foreach loop to yield a more straightforward implementation.

What you need to do:

Compile and run the program mandelbrot ispc. What is the maximum speedup you expect given what you know about the Xeon CPUs in 5201/5205? Why might the number you observe be less than this ideal? (Hint: Consider the characteristics of the computation you are performing? Describe the parts of the image that present challenges for SIMD execution? Comparing the performance of rendering the different views of the Mandelbrot set may help confirm your hypothesis.)

We remind you that for the code described in this subsection, the ISPC compiler maps gangs of program instances to SIMD instructions executed on a single core. This parallelization scheme differs from that of Program 1, where speedup was achieved by running threads on multiple cores.

Program 3, Part 2: ISPC Tasks (8 of 15 points)

ISPCs SPMD execution model and mechanisms like foreach facilitate the creation

of programs that utilize SIMD processing. The language also provides an additional mechanism utilizing multiple cores in an ISPC computation. This mechanism is launching ISPC tasks.

See the launch[2] command in the function mandelbrot_ispc_withtasks. This

command launches two tasks. Each task defines a computation that will be

executed by a gang of ISPC program instances. As given by the function

mandelbrot_ispc_task, each task computes a region of the final image. Similar

to how the foreach construct defines loop iterations that can be carried out

in any order (and in parallel by ISPC program instances, the tasks created by

this launch operation can be processed in any order (and in parallel on

different CPU cores).

What you need to do:

- Run

mandelbrot_ispcwith the parameter--tasks. What speedup do you observe? What is the speedup over the version ofmandelbrot_ispcthat does not partition that computation into tasks? - There is a simple way to improve the performance of

mandelbrot_ispc --tasksby changing the number of tasks the code creates. By only changing code in the functionmandelbrot_ispc_withtasks(), you should be able to achieve performance that exceeds the sequential version of the code by almost ten times! How did you determine how many tasks to create? Why does the number you chose work best? - Extra Credit: (2 points) What are differences between the pthread abstraction (used in Program 1) and the ISPC task abstraction? There are some obvious differences in semantics between the (create/join and (launch/sync) mechanisms, but the implications of these differences are more subtle. Here's a thought experiment to guide your answer: what happens when you launch 10,000 ISPC tasks? What happens when you launch 10,000 pthreads?

The smart-thinking student's question: Hey wait! Why are there two different

mechanisms (foreach and launch) for expressing independent, parallelizable

work to the ISPC system? Couldn't the system just partition the many iterations

of foreach across all cores and also emit the appropriate SIMD code for the

cores?

Answer: Great question! And there are a lot of possible answers. Come to office hours.

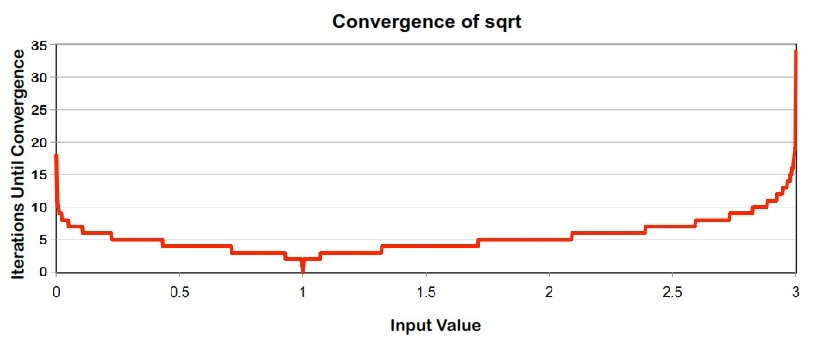

Program 4: Iterative sqrt (10 points)

Program 4 is an ISPC program that computes the square root of 20 million

random numbers between 0 and 3. It uses a fast, iterative implementation of

square root that uses Newton's method to solve the equation ${\frac{1}{x^2}} - S = 0$.

The value 1.0 is used as the initial guess in this implementation. The graph below shows the

number of iterations required for sqrt to converge to an accurate solution

for values in the (0-3) range. (The implementation does not converge for

inputs outside this range). Notice that the speed of convergence depends on the

accuracy of the initial guess.

What you need to do:

- Build and run

sqrt. Report the ISPC implementation speedup for single CPU core (no tasks) and when using all cores (with tasks). What is the speedup due to SIMD parallelization? What is the speedup due to multi-core parallelization? - Modify the contents of the array values to improve the relative speedup of the ISPC implementations. Describe a very-good-case input and report the resulting speedup achieved (for both the with and without tasks ISPC implementations). Does your modification improve SIMD speedup? Does it improve multi-core speedup? Explain why.

- Construct a worst-case input for

sqrt. Describe this input, describe why you chose it, and report the resulting relative performance of the ISPC implementations. What is the reason for the loss in efficiency? (keep in mind we are using the--target=sse4option for ISPC). - Extra Credit: (up to 3 points) Write your own version of the

sqrtfunction manually using SSE or AVX intrinsics. To get credit your implementation should be at least as fast (or faster) than the binary produced using ISPC. You may find the Intel Intrinsics Guide very helpful:

Program 5: BLAS saxpy (10 points)

Program 5 is an implementation of the saxpy routine in the BLAS (Basic Linear

Algebra Subproblems) library that is widely used (and heavily optimized) on

many systems. saxpy computes the simple operation C = scale*X+Y, where X, Y,

and C are vectors of $N$ elements (in Program 5, $N$ =20 million) and scale is a scalar. Note that

saxpy performs two math operations (one multiply, one add) for every three

elements used (two loads, one store). saxpy is a trivially parallelizable

computation and features predictable, regular data access and predictable

execution cost.

What you need to do:

- Compile and run

saxpy. The program will report the performance of sequential, ISPC, and ISPC (with tasks) implementations of saxpy. What speedup do you observe? Explain the performance of this program. Do you think it can be improved? - Extra Credit: Improve the performance of

saxpy. We're looking for significant speedup here, not just a few percentage points. If successful, describe how you did it (hint: the course staff will be very curious) and what a best-possible implementation on these systems might achieve.

Hand-in Instructions

Hand-in directories have been created at:

/afs/cs/academic/class/15418-s14-users/<ANDREW ID>/asst1

You may need to execute aklog cs.cmu.edu to avoid permission issues. We recommend you add this to your ~/.cshrc or ~/.bashrc file to avoid typing it every time.

Please place the following files in your handin directory:

- Your writeup, in a file called

writeup.pdf - Your implementation of

main.cppin Program 2, in a file calledprob2.cpp

If you would like to hand in code, for example, because you successfully completed an extra credit, you are free to include that as well in a single tar file. Please also tell the TAs to look for your extra credit. When handed in, all code must be compilable and runnable! We should be able to untar, make, and execute your programs without manual intervention.

Resources and Notes

- Extensive ISPC documentation and examples can be found at http://ispc.github.com/

- Specs for the Quad-Core Xeon CPUs in GHC 5201/5205 are available at: http://ark.intel.com/products/39718/Intel-Xeon-Processor-W3520

- Specs for the Six-Core Xeon CPUs in GHC 3000 are available at: http://ark.intel.com/products/47918/Intel-Xeon-Processor-W3670-12M-Cache-3_20-GHz-4_80-GTs-Intel-QPI

- If you wish to run these programs on an AVX-capable CPU, you will

need to tell ISPC to generate AVX, rather than SSE instructions. Please

see the Makefile comment about ISPCs

--target=avx-x2compiler flag. - Zooming into different locations of the mandelbrot image can be quite fascinating

- Intel provides a lot of supporting material about SSE and AVX instructions at http://software.intel.com/en-us/avx/.

- The Intel Intrinsics Guide is very useful.

-

Hyper-threading is disabled on GHC 5201/5205 machines so Linux reports them as having four virtual processing cores (not eight). ↩

-

Hyper-threading is enabled on GHC 3000 machines so Linux reports them as having twelve virtual processing cores. ↩

-

To view images remotely, use

ssh -Yand thedisplaycommand. ↩ -

Since pthreads were one-to-one with processing cores in Program 1, you effectively assigned work explicitly to cores. ↩

Correcting a mistake in "CMU418intrin.h" comments

The comments for the functions: _cmu418_vgt_float, _cmu418_vgt_int, _cmu418_vlt_float, _cmu418_vlt_int, _cmu418_veq_float, _cmu418_veq_int on line 94, 99, 104 of "CMU418intrin.h" do not quite reflect what the functions perform.

The comment should be: // otherwise keep the old value instead of: // otherwise return 1

All functions actually keeps the old value in vecResult if the mask disables the vector lane.

This comment was marked helpful 0 times.